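III. The basic mechanism of the Adam optimization algorithm

Adam differs from traditional stochastic gradient descent (SGD). Plain SGD uses a single learning rate (alpha) to update all of the weights, and that learning rate does not change during training. Adam, by contrast, computes estimates of the first and second moments of the gradients and uses them to give each parameter its own adaptive learning rate.

Adam: the Adam optimization algorithm is essentially Momentum and RMSprop combined. Since Momentum and RMSprop have already been covered, we can state Adam's update rule directly. The Adam algorithm combines …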
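
For reference, this is the per-parameter update rule as given in the original Adam paper (Kingma and Ba, 2014). Here $g_t^2$ is the elementwise square, both moment estimates start at $m_0 = v_0 = 0$, and the paper's suggested defaults are $\alpha = 0.001$, $\beta_1 = 0.9$, $\beta_2 = 0.999$, $\epsilon = 10^{-8}$. The $m_t$ line is the Momentum-style running average of the gradient, the $v_t$ line is the RMSprop-style running average of the squared gradient, and the hatted terms correct the bias toward zero caused by the zero initialization.

```latex
\begin{aligned}
g_t &= \nabla_\theta f_t(\theta_{t-1}) \\
m_t &= \beta_1 m_{t-1} + (1 - \beta_1)\, g_t \\
v_t &= \beta_2 v_{t-1} + (1 - \beta_2)\, g_t^2 \\
\hat{m}_t &= \frac{m_t}{1 - \beta_1^t}, \qquad \hat{v}_t = \frac{v_t}{1 - \beta_2^t} \\
\theta_t &= \theta_{t-1} - \alpha\, \frac{\hat{m}_t}{\sqrt{\hat{v}_t} + \epsilon}
\end{aligned}
```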
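
The contrast between one fixed learning rate and per-parameter adaptive steps can be sketched in plain Python. This is a minimal illustration, assuming parameters and gradients are flat lists of floats; the names `sgd_step`, `Adam`, and the toy objective f(x, y) = x² + 100y² are hypothetical choices for this example, not any library's API. Defaults follow the original Adam paper.

```python
import math


# Illustrative sketch only: names here are hypothetical, not a library API.
def sgd_step(params, grads, alpha=0.01):
    """Plain SGD: one fixed learning rate alpha shared by every weight."""
    return [p - alpha * g for p, g in zip(params, grads)]


class Adam:
    """Minimal Adam over a flat list of floats (defaults from Kingma and Ba)."""

    def __init__(self, n_params, alpha=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
        self.alpha, self.beta1, self.beta2, self.eps = alpha, beta1, beta2, eps
        self.m = [0.0] * n_params  # first-moment (Momentum-style) estimate per parameter
        self.v = [0.0] * n_params  # second-moment (RMSprop-style) estimate per parameter
        self.t = 0                 # time step, used for bias correction

    def step(self, params, grads):
        self.t += 1
        new_params = []
        for i, (p, g) in enumerate(zip(params, grads)):
            # Momentum part: exponential moving average of the gradient.
            self.m[i] = self.beta1 * self.m[i] + (1 - self.beta1) * g
            # RMSprop part: exponential moving average of the squared gradient.
            self.v[i] = self.beta2 * self.v[i] + (1 - self.beta2) * g * g
            # Bias correction: m and v start at 0, so early averages are too small.
            m_hat = self.m[i] / (1 - self.beta1 ** self.t)
            v_hat = self.v[i] / (1 - self.beta2 ** self.t)
            # Each parameter is scaled by its own 1 / (sqrt(v_hat) + eps).
            new_params.append(p - self.alpha * m_hat / (math.sqrt(v_hat) + self.eps))
        return new_params


if __name__ == "__main__":
    # Hypothetical toy objective f(x, y) = x^2 + 100 * y^2:
    # the two gradient components differ in scale by a factor of 100.
    def grad(xy):
        x, y = xy
        return [2 * x, 200 * y]

    sgd_xy = [1.0, 1.0]
    adam_xy = [1.0, 1.0]
    opt = Adam(n_params=2, alpha=0.1)
    for _ in range(200):
        # SGD's single alpha must stay below 0.01 or the y-coordinate diverges,
        # which in turn makes progress along x slow.
        sgd_xy = sgd_step(sgd_xy, grad(sgd_xy), alpha=0.005)
        adam_xy = opt.step(adam_xy, grad(adam_xy))
    print("SGD :", sgd_xy)
    print("Adam:", adam_xy)
```

On the first step, bias correction makes the corrected first moment equal the gradient and the corrected second moment equal its square, so every parameter moves by roughly alpha in the direction opposite its gradient, regardless of how large that gradient is. SGD instead moves each weight by alpha times the raw gradient, so one learning rate has to suit both the steepest and the flattest direction.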

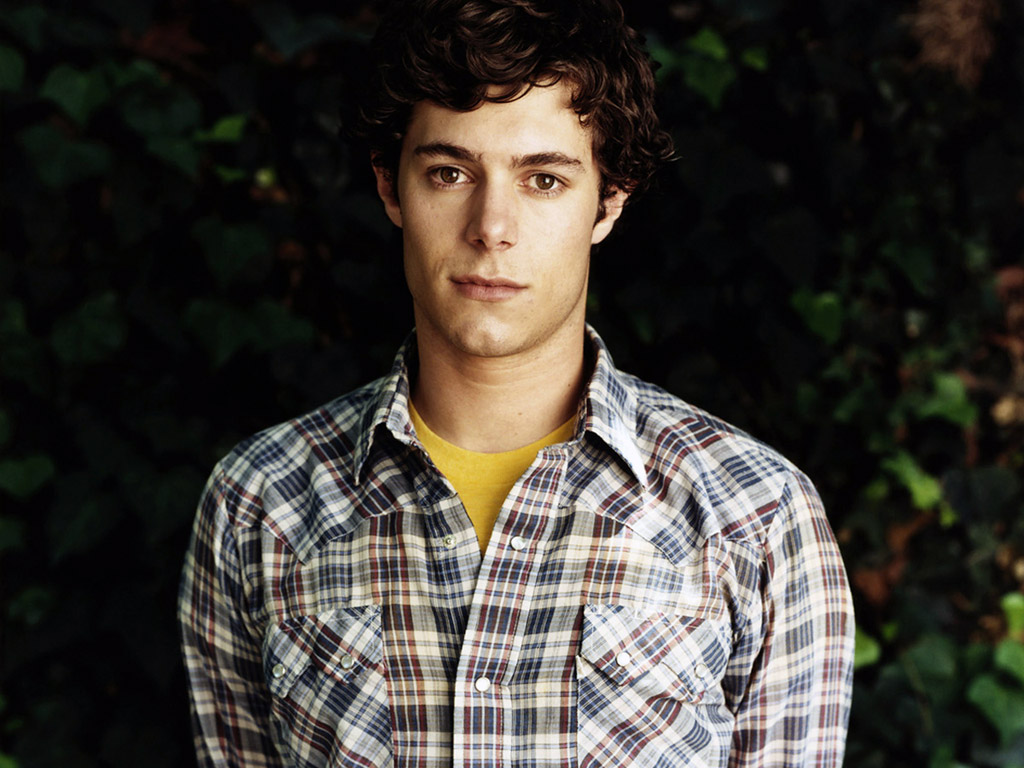
